Cloud Cost Management Journey

I joined Collectors almost a year ago as Director of Platform Engineering. My top priority was supporting Project Mercury, the codename for our Collectors Vault project and one of the biggest launches in Collectors’ history. As part of Project Mercury, we built a one-stop shop for collectibles that brings grading services, a new marketplace, and secure storage (the Vault) together under one platform. We had a very tight timeline: everything had to be ready and delivered by the 2022 National Sports Collectors Convention.

Cost optimization was not the priority during this project; timing and the product launch were. The Platform Engineering team was building a platform on AWS for all App teams to consume and to help with the launch. Kudos to all the teams who worked extremely hard and launched the project successfully without any significant hiccups or outages.

After the launch, Cloud cost management quickly became a priority. Costs were growing rapidly, driven both by the new project launches and by earlier projects whose cost had not been analyzed regularly. To manage cost effectively, near real-time visibility into cost and usage information was essential. We ran a proof of value (POV) on multiple tools and selected Vantage as our cost management solution.

In this blog, I’ll walk you through the best practices we adopted to optimize Cloud cost on AWS.

Forecasting and Budgeting

Forecasting and budgeting are essential steps in optimizing cost; we needed to plan and set expectations around cloud costs for our projects, applications, and more. We established a yearly budget for our Cloud cost based on annual/monthly trends. We decided to revisit this monthly at the Platform Engineering level and quarterly at the leadership level to adjust, considering new workloads and applications. To achieve better results, we created a list of all active AWS accounts with their owners and tied it to Vantage. This helped us quickly explain cost overages.
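
To illustrate why an account-to-owner list makes overages quick to explain, here is a minimal sketch. It is not how Vantage works internally; the account IDs, owners, budgets, and spend figures are all hypothetical, and `find_overages` is a helper invented for this example.

```python
from dataclasses import dataclass

@dataclass
class AccountSpend:
    account_id: str        # hypothetical AWS account ID
    owner: str             # team responsible for the account
    monthly_budget: float  # budgeted spend for the month, in dollars
    actual_spend: float    # actual spend for the month, in dollars

def find_overages(accounts, tolerance=0.0):
    """Return (owner, account_id, overage) for over-budget accounts, largest first."""
    over = []
    for a in accounts:
        # An account is over budget once spend exceeds budget plus the tolerance.
        overage = a.actual_spend - a.monthly_budget * (1 + tolerance)
        if overage > 0:
            over.append((a.owner, a.account_id, round(overage, 2)))
    return sorted(over, key=lambda row: row[2], reverse=True)

# Hypothetical data for illustration only.
accounts = [
    AccountSpend("111111111111", "platform", 10_000, 12_500),
    AccountSpend("222222222222", "marketplace", 8_000, 7_200),
    AccountSpend("333333333333", "ml", 5_000, 5_900),
]
overages = find_overages(accounts)
for owner, account_id, amount in overages:
    print(f"{owner} ({account_id}) is over budget by ${amount:,.2f}")
```

Because every account carries an owner, each overage in the output already names who to ask about it.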

Right-Sizing

We have legacy apps on AWS that were migrated with a lift-and-shift approach and sized for performance. The new infrastructure we were building was likewise sized for performance and delivery speed rather than cost, which made right-sizing feel like a never-ending task.

We streamlined this by first right-sizing the instances that offered the highest savings. In some cases, right-sizing cut an instance's cost by more than 50%. Once all the high-value tickets were done, we turned this into an ongoing cadence: insights and anomaly alerts from Vantage help us lower infrastructure cost by moving instances to the smallest size that still meets the application’s technical requirements.
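
The "highest value first" ordering can be sketched as a simple ranking by estimated monthly savings. The instance names and costs below are hypothetical, and `rank_rightsizing` is an illustrative helper rather than anything provided by Vantage or AWS.

```python
def rank_rightsizing(candidates):
    """Sort right-sizing candidates by estimated monthly savings, highest first.

    Each candidate is (name, current_monthly_cost, proposed_monthly_cost).
    """
    ranked = []
    for name, current_cost, proposed_cost in candidates:
        savings = current_cost - proposed_cost
        pct = savings / current_cost * 100
        ranked.append((name, savings, round(pct, 1)))
    return sorted(ranked, key=lambda row: row[1], reverse=True)

# Hypothetical candidates for illustration only.
candidates = [
    ("api-server", 560.0, 280.0),
    ("batch-worker", 1200.0, 500.0),
    ("staging-db", 300.0, 210.0),
]
ranked = rank_rightsizing(candidates)
for name, savings, pct in ranked:
    print(f"{name}: save ${savings:.0f}/month ({pct}%)")
```

Ranking by absolute savings rather than percentage keeps the first tickets focused on the changes that move the bill the most.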

Turn the lights off when you are not at work

At least 70% of the monthly hours are nights and weekends. Since we don’t have off-shore teams, we can drastically reduce the cost of our non-production resources by turning them off during off hours.
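
The 70% figure follows from simple arithmetic on a 168-hour week. The sketch below assumes a five-day work week and tries two workday lengths; both are assumptions for illustration, not our actual schedule.

```python
HOURS_PER_WEEK = 7 * 24  # 168 hours in a week

def off_hours_share(workdays_per_week, hours_per_workday):
    """Fraction of the week that falls outside working hours."""
    working = workdays_per_week * hours_per_workday
    return (HOURS_PER_WEEK - working) / HOURS_PER_WEEK

share_10h = off_hours_share(5, 10)  # 118 of 168 hours are off hours
share_8h = off_hours_share(5, 8)    # 128 of 168 hours are off hours
print(f"10-hour workdays: {share_10h:.1%} off hours")
print(f"8-hour workdays:  {share_8h:.1%} off hours")
```

Even with generous 10-hour workdays, just over 70% of the week is off hours. This share is an upper bound on the compute hours that can be saved; charges such as storage attached to stopped instances continue to accrue.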

Our teams are constantly innovating, building proofs of concept, and looking for new ways to help our customers. We also run cost-intensive instances for our AI/ML models.

We use the Resource Scheduler in AWS Systems Manager to stop instances on a schedule. This reduces costs by over 40% on some machines.
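
The decision a schedule encodes is simple enough to sketch in a few lines. This is not the Resource Scheduler's configuration or API; the working window (weekdays, 08:00 to 18:00) and the `should_be_running` helper are assumptions made up for illustration.

```python
from datetime import datetime, time

# Assumed working window for non-production resources (hypothetical values).
WORK_START = time(8, 0)
WORK_END = time(18, 0)
WORKDAYS = {0, 1, 2, 3, 4}  # Monday=0 ... Friday=4, per datetime.weekday()

def should_be_running(now):
    """Return True if a non-production instance should be up at `now`."""
    return now.weekday() in WORKDAYS and WORK_START <= now.time() < WORK_END

print(should_be_running(datetime(2022, 8, 1, 9, 0)))   # Monday morning
print(should_be_running(datetime(2022, 8, 1, 22, 0)))  # Monday night
print(should_be_running(datetime(2022, 8, 6, 10, 0)))  # Saturday
```

Anything that returns False here is a window in which a non-production instance could be stopped.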

Savings Plans

AWS Savings Plans is a flexible pricing model that offers lower prices than On-Demand in exchange for a one- or three-year hourly spend commitment on compute. It is very effective when the commitment is sized appropriately. Before setting up a Savings Plan, we ran all our infrastructure On-Demand. The Savings Plan reduced our overall AWS spend by over 10%. Vantage has a great dashboard that tracks how much we run On-Demand versus under the Savings Plan. We monitor this monthly and look for opportunities to purchase additional Savings Plans, since they can be stacked.
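
Why sizing the commitment matters can be shown with a simplified model. It assumes a single flat discount across all usage, which real Savings Plans rates do not have; the 25% discount and the dollar figures are hypothetical, and `hourly_cost` is an illustrative helper.

```python
def hourly_cost(on_demand_usage, commitment, discount):
    """Simplified hourly bill under a Savings Plan.

    on_demand_usage: usage for the hour, priced at On-Demand rates ($/hour)
    commitment:      Savings Plan commitment ($/hour, at discounted rates)
    discount:        assumed flat discount versus On-Demand, e.g. 0.25
    """
    # On-Demand-equivalent usage that the commitment can absorb.
    covered_capacity = commitment / (1 - discount)
    # Usage beyond the covered capacity is billed at On-Demand rates.
    uncovered = max(0.0, on_demand_usage - covered_capacity)
    # The commitment is paid whether or not it is fully used.
    return commitment + uncovered

well_sized = hourly_cost(on_demand_usage=100.0, commitment=60.0, discount=0.25)
over_committed = hourly_cost(on_demand_usage=50.0, commitment=60.0, discount=0.25)
print(f"Usage $100/h, commit $60/h: pay ${well_sized:.2f}/h")
print(f"Usage $50/h,  commit $60/h: pay ${over_committed:.2f}/h")
```

In the first case the bill drops from $100 to $80 per hour. In the second, the commitment exceeds what is actually used, so the bill is higher than running On-Demand, which is why we track coverage monthly before buying more.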

Reserved Instances

Reserved Instances (RIs) were an excellent complement for reducing the cost of infrastructure not covered by a Savings Plan. RIs are a great way to minimize risk, manage budgets predictably, and comply with policies requiring longer-term commitments. We use RIs for Relational Database Service (RDS) and OpenSearch, which reduced our cloud database and OpenSearch costs by 30%.

Kubecost for Elastic Kubernetes Service (EKS)

As part of Platform Engineering’s mission, “Enable developers to build reliable applications with speed and quality,” we needed a tool that provides real-time visibility into the cost of running our applications. After evaluating various tools, we chose Kubecost for optimizing cost on our EKS clusters.

Kubecost monitors resource utilization, attributes cost to namespaces, and provides configuration recommendations to reduce overage. The recommendations are tailored to our environment and behavior patterns. Kubecost automatically generates insights we can use to save 30-50% or more on our Kubernetes infrastructure spend.

The Path To Efficiency

After only a few months, our Cloud Optimization journey has led to 20-40% savings. Hopefully, these methods will be helpful to you and your organization. I want to close by emphasizing that applying these best practices is not a one-time activity but an ongoing process, and that it is the responsibility of the whole organization rather than a single team. Good luck with your own optimization journey!

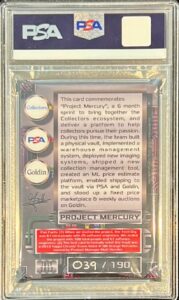

Fun fact: We commemorated our successful Project Mercury launch by gifting the team with special Mercury memorabilia. Here is one I got, my first card that kick-started my journey as a collector!

Kanwar Kohli

Kanwar Kohli is the Director of Platform Engineering at Collectors. He has extensive experience in engineering management, cloud technologies, and building internal platforms, gained at various Fortune 500 companies and startups. A man of many interests, Kanwar enjoys reading books, traveling, watching sports, and his new pastime, collecting sports cards.